Introduction

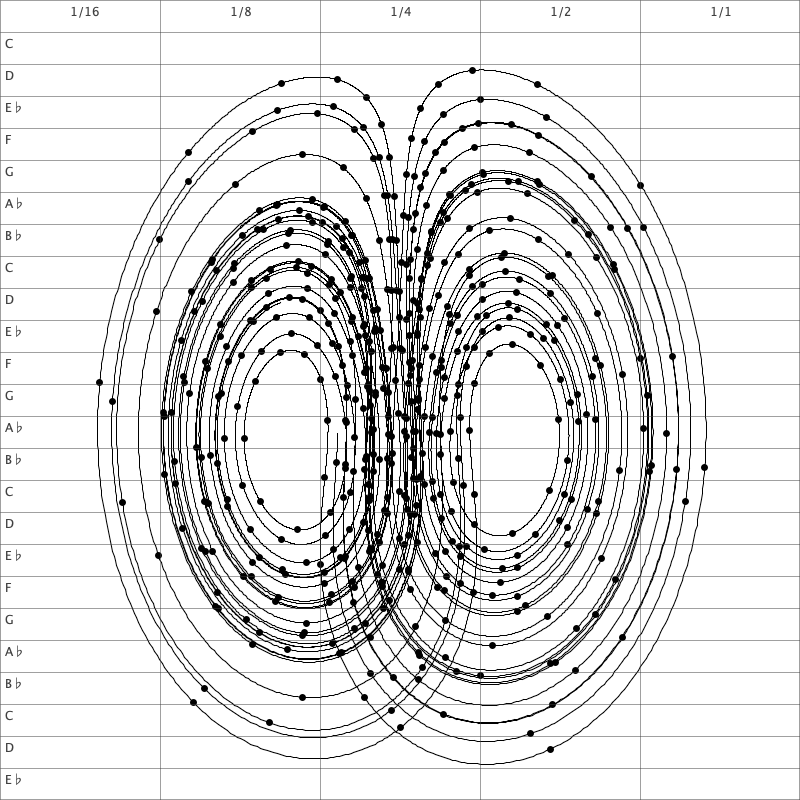

Most Lego instructions place bricks aligned with the grid, but a few use bricks at an angle, for example the Corner Garage. If we view a Lego board as a coordinate system and assume that the brick we want to place at an angle has one end at (0,0), then its other end can sit on the stud at (a,b) if there is an integer c such that a^2 + b^2 = c^2. Such an (a,b,c) is known as a Pythagorean triple, and there are infinitely many of them. The smallest one, (3,4,5), can also be used in Lego: a 6 stud piece can be placed at an angle from one stud to the stud 4 studs to the right and 3 studs above. Note that we need a 6 stud piece to cover a distance of 5, because the distance is measured between the centres of the first and last studs.
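As a small illustration of the condition above, the following Python sketch checks whether an integer offset (a,b) gives an integer distance c, and reports the brick length c + 1 needed to span it. The function name `integer_hypotenuse` is made up for this example.

```python
import math

def integer_hypotenuse(a, b):
    """Return the integer c with a^2 + b^2 = c^2, or None if no such c exists."""
    squared = a * a + b * b
    c = math.isqrt(squared)          # integer square root, rounded down
    return c if c * c == squared else None

c = integer_hypotenuse(4, 3)
print(c)       # distance between the two end studs
print(c + 1)   # number of studs on the brick, counting both ends
```

For the offset (4,3) this gives a distance of 5 and hence a 6 stud piece, while an offset such as (12,12) has no integer distance at all.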

Near Pythagorean triples

There are only a few small Pythagorean triples usable in Lego construction, where the longest brick is 16 studs long. However, the Corner Garage mentioned earlier uses the triple (12, 12, 17) to produce a 45° angle, even though this is not a Pythagorean triple, since \sqrt{12^2 + 12^2} \approx 16.9706 \neq 17. The relative error for this Lego-approved triple is 0.17%, so we use it as an upper bound on the error of the triples computed here.
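The 0.17% figure can be checked with a few lines of Python. This is only a sketch: the helper name `relative_error` is made up here, and dividing by the exact length (rather than by c) is an assumption, although both choices round to the same percentage for this triple.

```python
import math

def relative_error(a, b, c):
    """Relative difference between the brick distance c and the exact length."""
    exact = math.hypot(a, b)         # sqrt(a^2 + b^2)
    return abs(c - exact) / exact    # assumption: measured relative to the exact length

err = relative_error(12, 12, 17)
print(f"{err:.2%}")  # 0.17%
```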

We may extend the list of triples further because there are Lego pieces which allow us to put a brick half-way between studs, so we do not need to restrict ourselves to integers but may include half-integers too. If we restrict the coordinates to be at most 15 units long, there are 44 triples. Sorted by angle they are:

\begin{align*}

1.91°&: (15, 0.5, 15),\newline

1.97°&: (14.5, 0.5, 14.5),\newline

2.05°&: (14, 0.5, 14),\newline

2.12°&: (13.5, 0.5, 13.5),\newline

2.2°&: (13, 0.5, 13),\newline

2.29°&: (12.5, 0.5, 12.5),\newline

2.39°&: (12, 0.5, 12),\newline

2.6°&: (11, 0.5, 11),\newline

2.86°&: (10, 0.5, 10),\newline

3.18°&: (9, 0.5, 9),\newline

15.64°&: (12.5, 3.5, 13),\newline

16.26°&: (12, 3.5, 12.5),\newline

16.93°&: (11.5, 3.5, 12),\newline

19.44°&: (8.5, 3, 9),\newline

20.14°&: (15, 5.5, 16),\newline

20.77°&: (14.5, 5.5, 15.5),\newline

22.62°&: (6, 2.5, 6.5),\newline

22.62°&: (12, 5, 13),\newline

25.71°&: (13.5, 6.5, 15),\newline

28.07°&: (7.5, 4, 8.5),\newline

28.07°&: (15, 8, 17),\newline

29.98°&: (13, 7.5, 15),\newline

30.96°&: (15, 9, 17.5),\newline

33.69°&: (7.5, 5, 9),\newline

33.69°&: (15, 10, 18),\newline

34.82°&: (11.5, 8, 14),\newline

35.13°&: (13.5, 9.5, 16.5),\newline

36.87°&: (2, 1.5, 2.5),\newline

36.87°&: (4, 3, 5),\newline

36.87°&: (6, 4.5, 7.5),\newline

36.87°&: (8, 6, 10),\newline

36.87°&: (10, 7.5, 12.5),\newline

36.87°&: (12, 9, 15),\newline

36.87°&: (14, 10.5, 17.5),\newline

38.42°&: (14.5, 11.5, 18.5),\newline

38.66°&: (12.5, 10, 16),\newline

38.99°&: (10.5, 8.5, 13.5),\newline

39.47°&: (8.5, 7, 11),\newline

40.24°&: (13, 11, 17),\newline

42.61°&: (12.5, 11.5, 17),\newline

43.6°&: (10.5, 10, 14.5),\newline

45°&: (8.5, 8.5, 12),\newline

45°&: (12, 12, 17),\newline

45°&: (14.5, 14.5, 20.5)

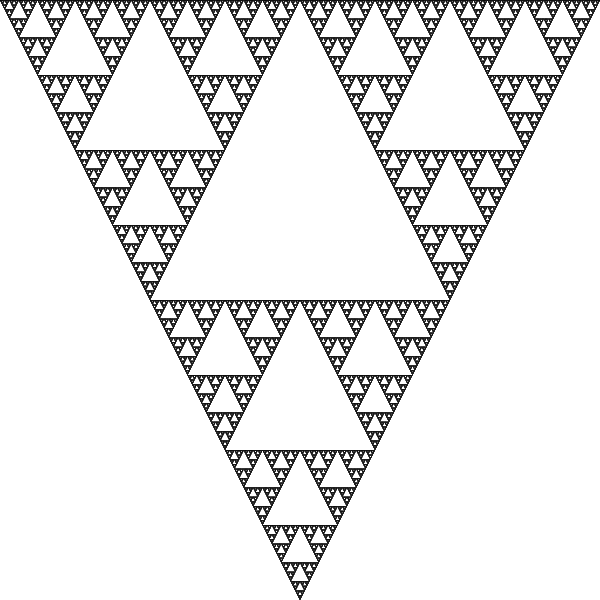

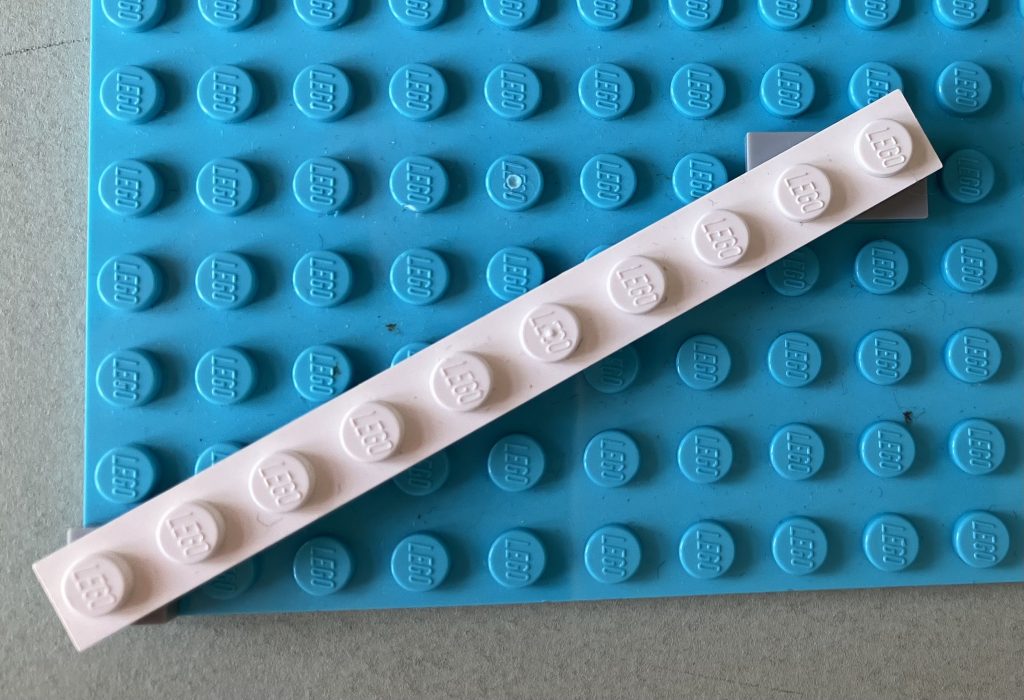

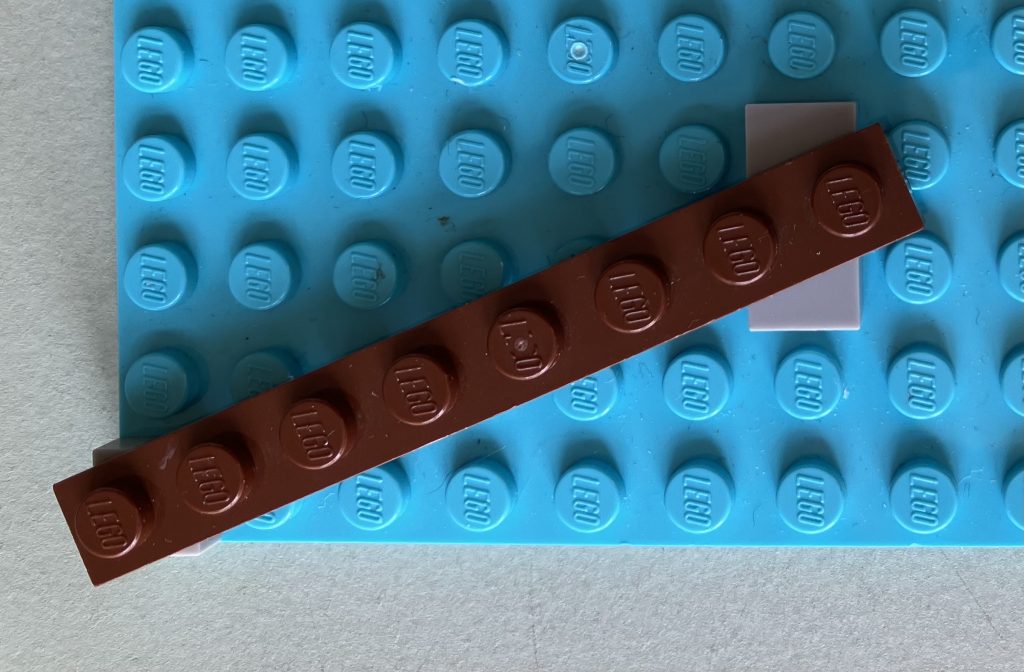

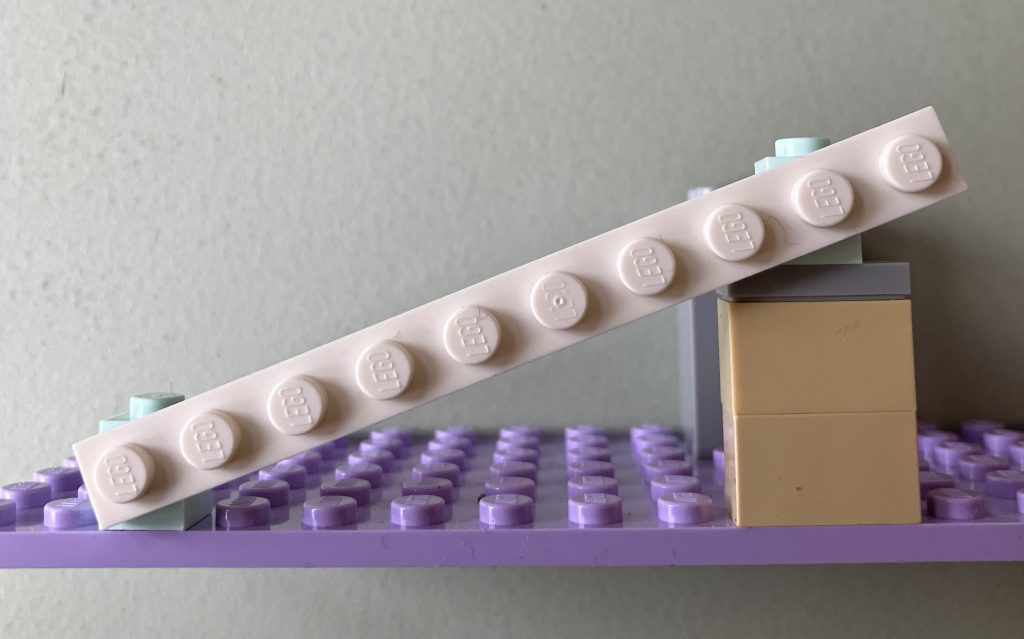

\end{align*}Note that only angles up to 45 degrees are included here, since larger angles can be constructed from symmetry. Below are pictures of two examples of the triples (7.5, 4, 8.5) (left) and (6, 2.5, 6.5) .
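The search for near Pythagorean triples on the half-stud grid can be sketched in Python as follows. This is not the original source code: the function name `near_triples`, the choice of the nearest half-integer as c, and the error measure are all assumptions, and no attempt is made to reproduce the exact filtering behind the 44 triples listed above, so the output may contain more or fewer entries.

```python
import math

# Error bound taken from the Lego-approved triple (12, 12, 17).
MAX_ERR = abs(17 - math.hypot(12, 12)) / math.hypot(12, 12)

def near_triples(limit=15.0, step=0.5, max_err=MAX_ERR):
    """Yield (angle_deg, a, b, c) with limit >= a >= b > 0 on a grid of `step` studs."""
    n = round(limit / step)
    for i in range(1, n + 1):
        for j in range(1, i + 1):            # b <= a, so angles stay at or below 45 degrees
            a, b = i * step, j * step
            exact = math.hypot(a, b)
            c = round(exact / step) * step   # nearest length on the grid
            if abs(c - exact) / exact <= max_err:
                yield (math.degrees(math.atan2(b, a)), a, b, c)

triples = sorted(near_triples())
for angle, a, b, c in triples:
    print(f"{angle:.2f}°: ({a:g}, {b:g}, {c:g})")
```

Working in multiples of `step` keeps every coordinate an exact binary fraction, so exact triples such as (7.5, 4, 8.5) come out with an error of exactly zero.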

Vertical angles

The examples above place bricks at an angle horizontally on a plate. However, some bricks, like Lego Technic bricks or the “light holder” bricks, allow you to build vertical angles as well, because you can attach a brick to their side.

The height of a brick and the width of one stud are, however, not equal, so the triples have to be computed differently. We still allow the horizontal coordinate to be an integer or a half-integer, as above, but for the vertical axis we use the height of one plate as the unit. If you’ve ever built with Lego, you probably know that three plates equal one brick, but it is also true that five plates equal two studs. So if we keep using one horizontal stud as the unit, the vertical coordinate must be a multiple of \frac{2}{5}. This gives the following list of 11 near Pythagorean triples:

\begin{align*}

2.3°&: (10, 0.4, 10),\newline

2.5°&: (9, 0.4, 9),\newline

2.9°&: (8, 0.4, 8),\newline

3.3°&: (7, 0.4, 7),\newline

17.7°&: (10, 3.2, 10.5),\newline

20.5°&: (7.5, 2.8, 8),\newline

23.6°&: (5.5, 2.4, 6),\newline

28.1°&: (7.5, 4, 8.5),\newline

31°&: (6, 3.6, 7),\newline

36.9°&: (8, 6, 10),\newline

40.4°&: (8, 6.8, 10.5),\newline

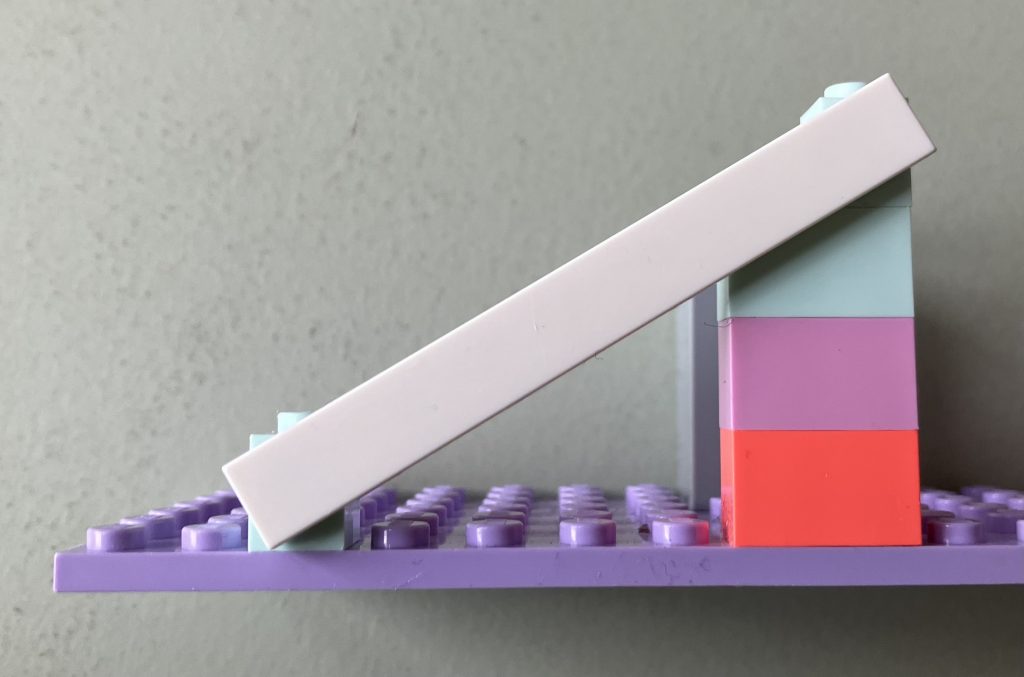

\end{align*}Below is a picture of a usage of the triples (6, 3.6, 7) and (7.5, 2.8, 8). Recall that 0.4 on the vertical axis corresponds to one plate and 1.2 is the full height of a brick.
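To make the conversion from plates to stud units concrete, here is a short Python sketch that evaluates a vertical triple given its rise in plates. The names `PLATE_HEIGHT` and `vertical_triple` are made up for this example, and the error measure is the same assumed relative error as for the horizontal case.

```python
import math

PLATE_HEIGHT = 2 / 5   # height of one plate in stud units (five plates = two studs)

def vertical_triple(a, plates, c):
    """Return (angle_deg, relative_error) for a run of `a` studs,
    a rise of `plates` plates, and a brick spanning a distance of `c` studs."""
    b = plates * PLATE_HEIGHT
    exact = math.hypot(a, b)
    return math.degrees(math.atan2(b, a)), abs(c - exact) / exact

# (6, 3.6, 7): a rise of 9 plates, i.e. 3 bricks
angle, err = vertical_triple(6, 9, 7)
print(f"{angle:.1f}°, relative error {err:.3%}")

# (7.5, 2.8, 8): a rise of 7 plates
angle2, err2 = vertical_triple(7.5, 7, 8)
print(f"{angle2:.1f}°, relative error {err2:.3%}")
```

Both triples stay well below the 0.17% bound taken from (12, 12, 17).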

Source code

The source code used to compute the triples is available here.